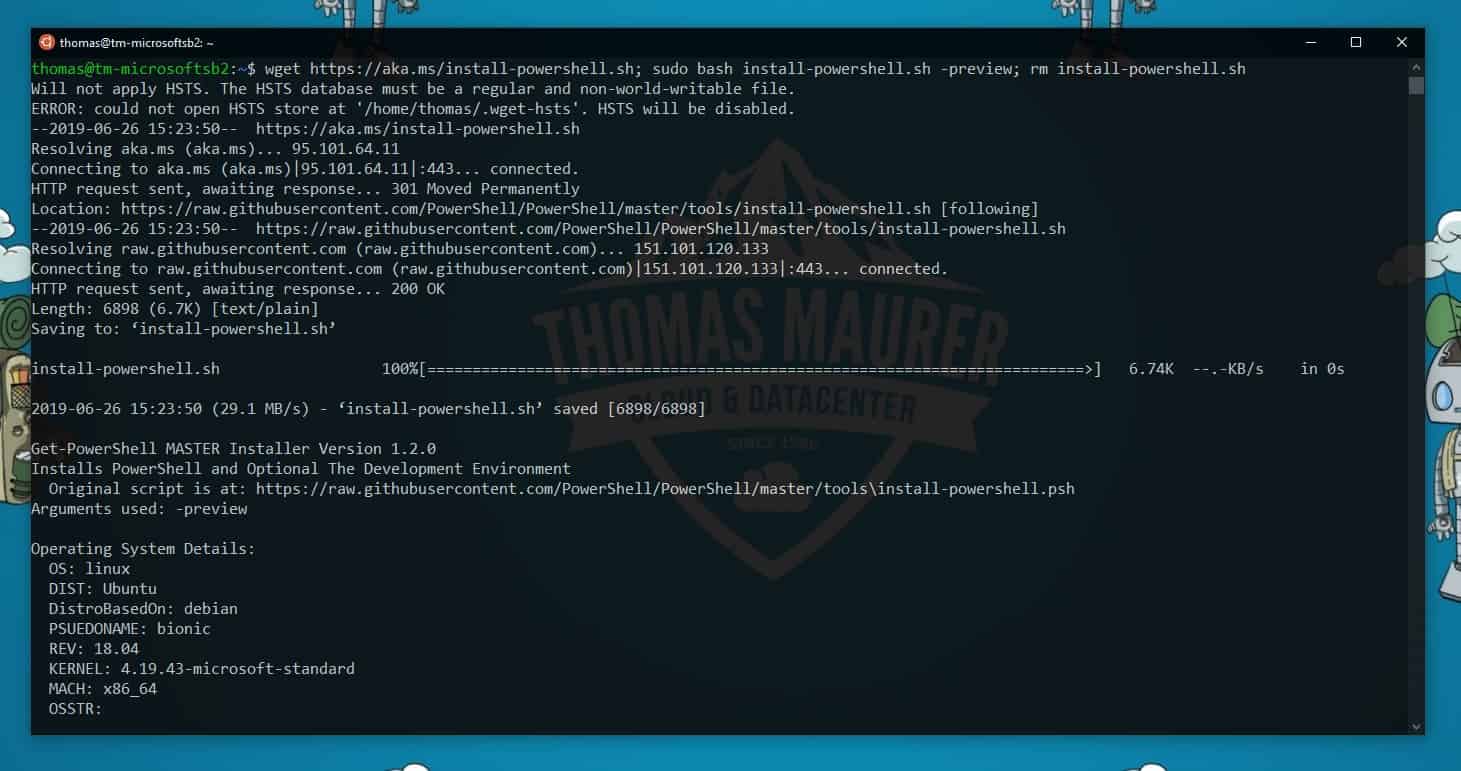

The script should log pages that have been visited and ensure we don’t visit the same page more than once. My PowerShell script will start by making a request to the root site, based on the URL the user provided. Using the Invoke-WebRequest PowerShell cmdlet, I will retrieve the page’s HTML content and store it in a variable. Then, I will search through that content for anchor tags containing links (`<a>` tags with the href property). Using a combination of string methods, I will retrieve the URL of every link on the main page, and will call a recursive method that will repeat the same “link-retrieval” process on each link retrieved from the main page.

The script will need a try/catch block to ensure no errors are thrown back to the user when URLs retrieved from links are invalid (e.g. …). The script will also account for relative URLs, meaning that if a URL retrieved from a page’s content starts with ‘/’, the domain name of the parent page will be used to form an absolute link. Finally, my script will display some level of execution status in the PowerShell console: for every link crawled, it will display a new line containing the total number of links visited so far, the hierarchical level of the link (compared to the parent site), and the URL being visited.

In my case, I have hardcoded the values of the parameters instead of prompting the user to enter them manually. For simplicity’s sake, they are hardcoded in the top section of my script. The figures show the execution of the script on my personal blog.
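To make the crawling steps more concrete, here is a minimal sketch of how they could fit together. It is not the original script: the function names (`Get-LinksFromHtml`, `Invoke-Crawl`), the variable names and the `https://example.com/` start URL are all placeholders I made up for illustration, authentication is left out, and the string scan only handles double-quoted `href` values (so it will also pick up `href` attributes on tags other than anchors).

```powershell
# Hypothetical settings, hardcoded at the top of the script as described above.
$StartUrl = 'https://example.com/'   # placeholder start site (assumption)
$MaxLinks = 25                       # stop after this many visited links
$MaxLevel = 2                        # deepest level to follow from the start site
$Visited  = New-Object 'System.Collections.Generic.HashSet[string]'

function Get-LinksFromHtml {
    param([string]$Html, [string]$PageUrl)

    $links = New-Object 'System.Collections.Generic.List[string]'
    $pos = 0
    while ($true) {
        # Plain string methods: find the next href=" and the quote that closes it.
        $start = $Html.IndexOf('href="', $pos, [System.StringComparison]::OrdinalIgnoreCase)
        if ($start -lt 0) { break }
        $start += 6
        $end = $Html.IndexOf('"', $start)
        if ($end -lt 0) { break }
        $href = $Html.Substring($start, $end - $start)
        $pos = $end + 1

        if ($href.StartsWith('/') -and -not $href.StartsWith('//')) {
            # Relative URL: prepend the scheme and host of the parent page.
            $parent = [System.Uri]$PageUrl
            $href = $parent.GetLeftPart([System.UriPartial]::Authority) + $href
        }
        # Keep only absolute http(s) links; fragments, mailto: and the like are dropped.
        if ($href.StartsWith('http')) { $links.Add($href) }
    }
    return $links
}

function Invoke-Crawl {
    param([string]$Url, [int]$Level)

    if ($Level -gt $MaxLevel -or $Visited.Count -ge $MaxLinks) { return }
    if (-not $Visited.Add($Url)) { return }   # Add() returns $false if already visited

    # Status line: links visited so far, level of the link, URL being visited.
    Write-Host ('[{0}] level {1}: {2}' -f $Visited.Count, $Level, $Url)

    try {
        $response = Invoke-WebRequest -Uri $Url -UseBasicParsing
        foreach ($link in @(Get-LinksFromHtml -Html $response.Content -PageUrl $Url)) {
            Invoke-Crawl -Url $link -Level ($Level + 1)
        }
    }
    catch {
        # Invalid or unreachable URLs are reported and skipped instead of failing the run.
        Write-Host ('Skipping {0}: {1}' -f $Url, $_.Exception.Message)
    }
}

# To start crawling (requires network access):
# Invoke-Crawl -Url $StartUrl -Level 0
```

The `HashSet` does double duty here: it is the log of visited pages, and its `Add` method reports whether a URL was already present, which is what prevents visiting the same page twice.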

I have a need to simulate traffic after hours on one of our SharePoint sites to investigate a performance issue where the w3wp.exe process crashes after a few hours of activity. I have decided to develop a PowerShell script to mimic a user accessing various pages on the site and clicking on any links they find. This PowerShell script should prompt the user for their credentials, for the URL of the start site they wish to crawl, for the maximum number of links the script should visit before aborting, and last but not least, for the maximum level of pages in the architecture the crawler should visit.

Wget PowerShell download

The problem you often run into when you search for sample scripts is that you mostly get samples using Linux Bash. Because, who would be stupid enough to use Wget on Windows, right? All the command-line options should be the same, however. The easy, quick-and-dirty way to use ‘The Real’ Wget is to just put the executable somewhere and reference it directly in your script file.

I had the need to download a bunch of sample images from a sample image site. Even though the URLs were sequential, some were broken, and I needed a list of images with a sequential naming structure.
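As a rough illustration of referencing the real Wget executable directly from a script, the sketch below loops over sequential image URLs and skips the broken ones. Everything specific in it is an assumption on my part: the `C:\Tools\wget.exe` path, the `https://example.com/samples/image` base URL, the `.jpg` extension, the `image_001.jpg` naming pattern and the two function names are placeholders, not values from my actual script.

```powershell
# All paths and URLs below are placeholders (assumptions), adjust them to your setup.
$Wget      = 'C:\Tools\wget.exe'                     # full path to the GNU Wget binary
$BaseUrl   = 'https://example.com/samples/image'     # images assumed at image1.jpg, image2.jpg, ...
$OutputDir = Join-Path $PWD 'images'

function Get-SequentialName {
    param([int]$Index)
    # Zero-padded so the files sort correctly: image_001.jpg, image_002.jpg, ...
    return ('image_{0:D3}.jpg' -f $Index)
}

function Save-SampleImages {
    param([int]$First = 1, [int]$Last = 20)

    New-Item -ItemType Directory -Path $OutputDir -Force | Out-Null
    $saved = 0
    foreach ($i in $First..$Last) {
        # Name files by how many downloads succeeded, so broken URLs leave no gaps.
        $target = Join-Path $OutputDir (Get-SequentialName -Index ($saved + 1))

        # Calling the executable by its full path bypasses any PowerShell alias named wget.
        & $Wget --quiet --tries=1 "--output-document=$target" "$BaseUrl$i.jpg"

        if ($LASTEXITCODE -eq 0) {
            $saved++
        }
        else {
            # Wget can leave an empty output file behind on failure; clean it up.
            Remove-Item -Path $target -ErrorAction SilentlyContinue
        }
    }
    return $saved
}

# To run the download (requires wget.exe at $Wget and network access):
# Save-SampleImages -First 1 -Last 20
```

The options used (`--quiet`, `--tries`, `--output-document`) are the same ones you would pass to Wget in Bash, which is the whole point of using the real binary.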

Wget PowerShell Windows

Continuing the trend I set in the last few posts, I’ll be sharing another PowerShell script in this post. This time, however, the focus is Wget on Windows instead of Sitecore PowerShell. If you’ve ever used a Linux distro before (or saw The Social Network), you’ll know about Wget and how powerful it can be. If, like me, you later tried to use it in Windows PowerShell, you might have been surprised that it did not behave as you expected.

This is because the wget command in PowerShell on Windows is just an alias for Microsoft’s own Invoke-WebRequest. To confuse you more, there is also a Windows binary of GNU Wget (the one you’re familiar with from Linux).
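If you are unsure what `wget` resolves to on your machine, a quick check like the one below can help. The function name `Get-WgetResolution` is made up for this example. Note that, as far as I know, the `wget` alias exists in Windows PowerShell 5.1 but was removed in PowerShell 6 and later, so the result depends on your version.

```powershell
function Get-WgetResolution {
    # Look for a PowerShell alias called wget (present in Windows PowerShell 5.1).
    $alias = Get-Alias -Name wget -ErrorAction SilentlyContinue
    if ($alias) {
        return "wget is an alias for $($alias.Definition)"
    }

    # No alias: look for a real executable on the PATH instead.
    $app = Get-Command -Name wget -CommandType Application -ErrorAction SilentlyContinue |
        Select-Object -First 1
    if ($app) {
        return "wget is the executable $($app.Source)"
    }

    return 'wget was not found as an alias or an executable'
}

Get-WgetResolution
```

Where the alias is in place, typing `wget.exe` (with the extension) or the full path to the binary is a simple way to reach the real GNU Wget.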